In an era where artificial intelligence (AI) is increasingly integrated into healthcare diagnostics, a series of high-profile failures has exposed critical gaps in automated medical analysis. These incidents, characterized by diagnostic misclassifications and system breakdowns, have prompted urgent calls from clinicians and technologists for stricter validation and oversight mechanisms.

The most recent failure occurred when an AI system designed to interpret medical imaging and diagnostic data encountered an unrecoverable uplink error, rendering it incapable of processing critical patient information. This event is not isolated; similar failures have been documented across multiple healthcare systems, raising concerns about the reliability of AI in high-stakes medical decision-making.

Why This Is Escalating

- Increased AI Adoption: The rapid integration of AI tools in radiology, pathology, and primary care has outpaced the development of comprehensive validation protocols.

- Data Integrity Issues: AI systems often rely on fragmented or incomplete datasets, leading to skewed diagnostic outputs.

- Regulatory Gaps: Current frameworks for AI in healthcare lack standardized benchmarks for accuracy, transparency, and accountability.

- Human-AI Disconnect: Over-reliance on AI without adequate clinician oversight has resulted in delayed or incorrect diagnoses.

Understanding the Condition

Diagnostic AI systems typically operate through a series of interconnected components:

- Data Ingestion: AI models ingest patient data, including imaging scans, lab results, and clinical histories.

- Pattern Recognition: Machine learning algorithms identify patterns and anomalies within the data.

- Diagnostic Output: The system generates a preliminary diagnosis or recommendation for clinician review.

- Validation Loop: Clinicians validate or override AI outputs, ensuring accuracy before finalizing treatment plans.

However, when any of these stages fails, as happened during the uplink error, the entire diagnostic process collapses, leaving clinicians without critical decision-support tools. In some cases, these failures have led to:

- Misdiagnosis of life-threatening conditions (e.g., cancer, stroke).

- Delayed treatment due to system unavailability.

- Increased cognitive load on healthcare providers, who must manually verify AI outputs.
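The four stages and the failure mode described above can be sketched as a small pipeline. This is a minimal illustration, not any vendor's implementation: `UplinkError`, `Result`, `ingest`, `recognize`, `to_finding`, `run_pipeline`, the `nodule_score` field, and the 0.5 threshold are all hypothetical names and values chosen for the example. The point it shows is that a failure in any stage should route the study to a clinician instead of silently stopping.

```python
from dataclasses import dataclass
from typing import Optional


class UplinkError(Exception):
    """Hypothetical error: the system cannot reach its data source."""


@dataclass
class Result:
    study_id: str
    finding: Optional[str]  # preliminary AI finding, None if the pipeline failed
    status: str             # "pending_review" or "manual_review"
    reason: str = ""


def ingest(study_id: str, uplink_ok: bool) -> dict:
    # Stage 1: data ingestion. Fails when the uplink is down.
    if not uplink_ok:
        raise UplinkError(f"cannot fetch study {study_id}")
    return {"study_id": study_id, "nodule_score": 0.82}  # placeholder data


def recognize(data: dict) -> float:
    # Stage 2: pattern recognition. A stand-in for a trained model.
    return data["nodule_score"]


def to_finding(score: float, threshold: float = 0.5) -> str:
    # Stage 3: diagnostic output, always preliminary.
    return "suspicious nodule" if score >= threshold else "no finding"


def run_pipeline(study_id: str, uplink_ok: bool = True) -> Result:
    try:
        data = ingest(study_id, uplink_ok)
        finding = to_finding(recognize(data))
    except Exception as exc:
        # A failure in any stage sends the study to a clinician
        # instead of leaving it unprocessed.
        return Result(study_id, None, "manual_review", str(exc))
    # Stage 4: validation loop. The finding waits for clinician review.
    return Result(study_id, finding, "pending_review")
```

In this sketch, `run_pipeline("CT-002", uplink_ok=False)` returns a result with status `manual_review` and no finding, so the outage is visible to staff instead of being lost.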

Industry Response and Solutions

In response to these failures, healthcare organizations and technology developers are exploring several mitigation strategies:

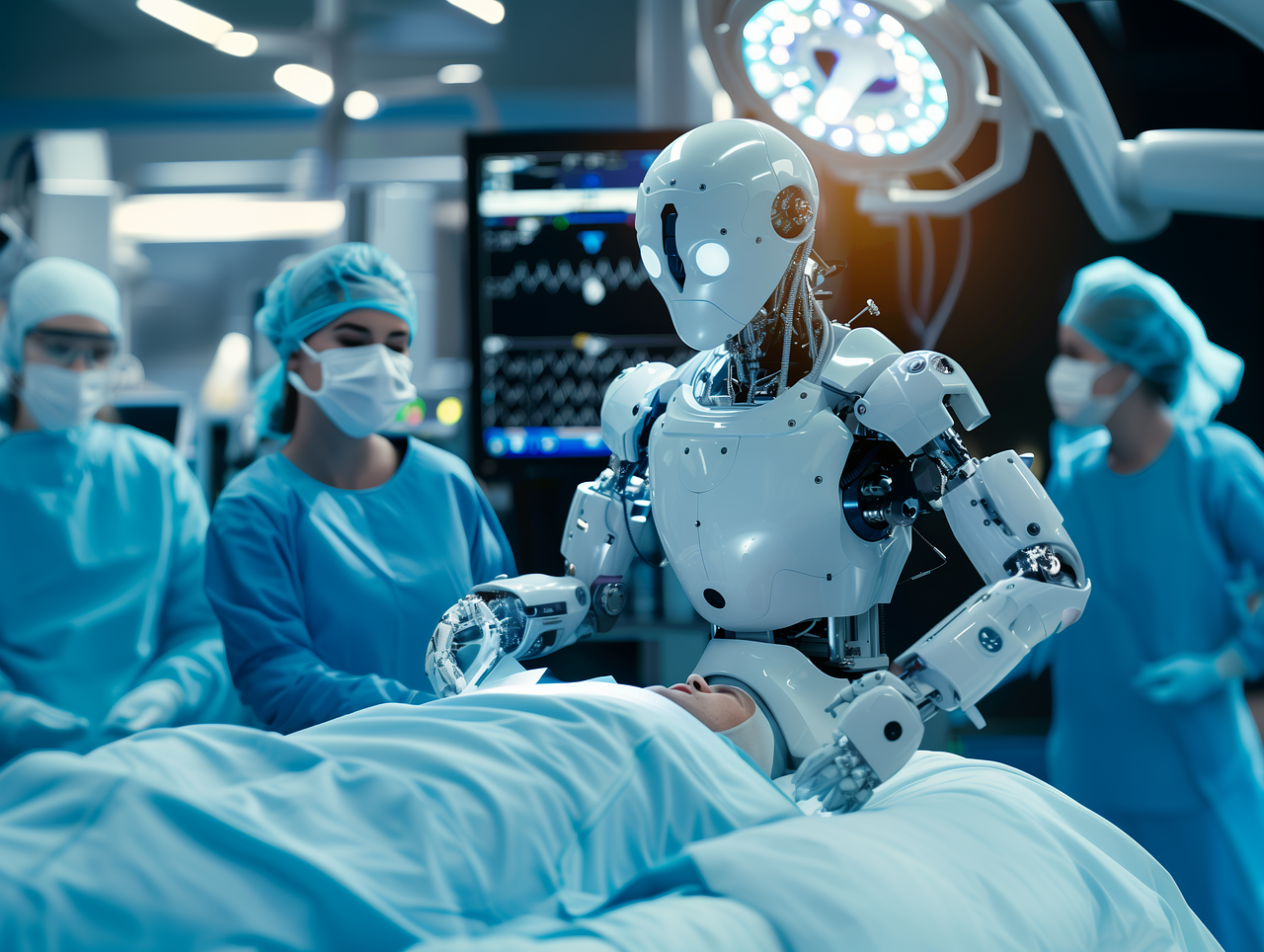

- Redundancy Systems: Implementing backup AI models or manual review processes to ensure continuity of care.

- Enhanced Validation Protocols: Developing standardized testing frameworks to assess AI performance across diverse patient populations and clinical scenarios.

- Human-in-the-Loop (HITL) Models: Requiring clinician approval for all AI-generated diagnoses to reduce over-reliance on automated systems.

- Transparency Initiatives: Publishing AI decision-making processes to improve trust and accountability among healthcare providers.
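As a rough sketch of how redundancy and human-in-the-loop approval could fit together, the example below tries a list of models in order, falls back to manual review when all of them fail, and never marks a finding as final until a clinician signs off. The function names, dictionary keys, and model interface are assumptions made for illustration and do not describe any specific product.

```python
from typing import Callable, Optional, Sequence, Tuple

# Hypothetical model interface: takes a study, returns a finding string.
Model = Callable[[dict], str]


def diagnose_with_fallback(study: dict, models: Sequence[Tuple[str, Model]]) -> dict:
    """Try each model in order; fall back to manual review if all fail."""
    errors = []
    for name, model in models:
        try:
            finding = model(study)
        except Exception as exc:
            errors.append(f"{name}: {exc}")
            continue
        # The result is a draft only: "final" stays False until sign-off.
        return {"finding": finding, "source": name, "errors": errors, "final": False}
    return {"finding": None, "source": "manual_review", "errors": errors, "final": False}


def clinician_sign_off(draft: dict, clinician: str, override: Optional[str] = None) -> dict:
    """Finalize a draft. The clinician may accept it or override it."""
    finding = override if override is not None else draft["finding"]
    if finding is None:
        raise ValueError("no AI finding available; the clinician must enter one")
    return {**draft, "finding": finding, "signed_by": clinician, "final": True}
```

Keeping the error list on the draft means the clinician can see which automated path failed before deciding whether to trust the backup result.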

Leading institutions, including the World Health Organization (WHO) and the U.S. Food and Drug Administration (FDA), have issued draft guidelines emphasizing the need for rigorous pre-market testing and post-market surveillance of AI diagnostic tools. These guidelines aim to address the black-box nature of many AI systems, where the reasoning behind diagnostic outputs remains opaque to clinicians.

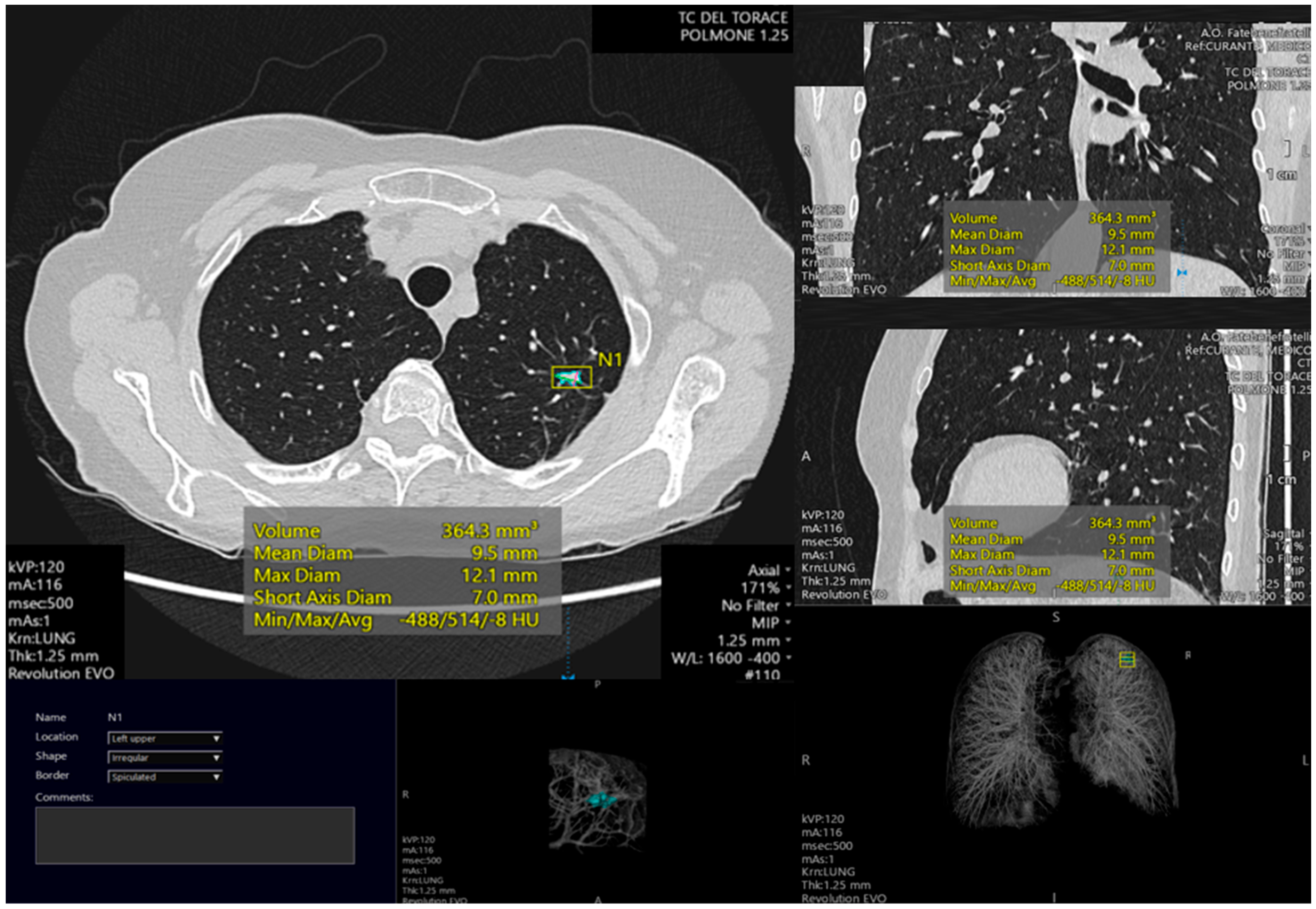

Case Study: The Radiology Collapse

A recent incident at a major urban hospital demonstrated the real-world consequences of AI diagnostic failures. During a routine radiology screening, an AI system designed to detect lung nodules failed to process a CT scan due to an uplink error. The system’s fallback mechanism, which was supposed to trigger a manual review, malfunctioned, resulting in:

- A 48-hour delay in diagnosing a patient with early-stage lung cancer.

- Increased radiation exposure for the patient, who underwent additional scans to compensate for the missed diagnosis.

- Heightened scrutiny of the hospital’s AI integration strategy, with calls for a temporary moratorium on automated diagnostics until systems could be stabilized.

This case highlights the fragility of AI-dependent workflows and the need for fail-safe mechanisms that prioritize patient safety over technological convenience.
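One possible fail-safe for the situation in this case, where the fallback itself malfunctions, is an independent check that does not rely on the AI system reporting its own errors. The sketch below flags any study that has neither an AI result nor a manual review assignment after a deadline. The field names (`received_at`, `ai_result`, `manual_review_assigned`) and the one-hour default are assumptions for illustration, not details from the incident.

```python
from datetime import datetime, timedelta


def find_stalled_studies(studies, now, max_wait=timedelta(hours=1)):
    """Return IDs of studies with no AI result and no manual review
    assignment that have been waiting longer than max_wait."""
    stalled = []
    for study in studies:
        unresolved = (
            study.get("ai_result") is None
            and not study.get("manual_review_assigned", False)
        )
        if unresolved and now - study["received_at"] > max_wait:
            stalled.append(study["id"])
    return stalled
```

Run on a schedule by a separate process, a check like this would surface a silent stall within the chosen deadline instead of after days.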

Expert Perspectives

Dr. Elena Vasquez, a radiologist and AI ethics researcher at Stanford University, emphasized the importance of balancing innovation with caution: "AI has the potential to revolutionize diagnostics, but only if we address its limitations head-on. The current failures are not a reflection of AI’s inherent capabilities but rather a symptom of rushed implementation and insufficient safeguards."

Similarly, Dr. Raj Patel, a data scientist at MIT, noted: "The uplink error is a wake-up call. We need to treat AI like a medical device—subject to the same rigorous standards as any other tool in a clinician’s arsenal."

The Path Forward

To prevent future diagnostic failures, stakeholders across the healthcare ecosystem must collaborate on the following priorities:

- Standardized Benchmarks: Establishing universal accuracy thresholds for AI diagnostic tools, with penalties for systems that fail to meet them.

- Interoperability: Ensuring AI systems can seamlessly integrate with existing electronic health records (EHRs) and clinical workflows.

- Continuous Monitoring: Implementing real-time auditing of AI performance to detect and correct errors before they impact patient care.

- Education and Training: Providing clinicians with comprehensive training on AI limitations, ethical considerations, and best practices for collaboration with automated systems.
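Continuous monitoring can take many forms. One simple version, sketched below, tracks how often clinicians agree with the AI finding over a rolling window and raises an alert when agreement drops below a threshold. The class name, window size, and thresholds are illustrative assumptions; a real deployment would need clinically justified values and would likely track sensitivity and specificity separately.

```python
from collections import deque


class AgreementMonitor:
    """Rolling agreement rate between AI findings and clinician findings."""

    def __init__(self, window=100, min_agreement=0.9, min_samples=20):
        self.outcomes = deque(maxlen=window)  # True where AI and clinician agreed
        self.min_agreement = min_agreement
        self.min_samples = min_samples

    def record(self, ai_finding, clinician_finding):
        """Store one comparison and return True if an alert should fire."""
        self.outcomes.append(ai_finding == clinician_finding)
        return self.alert()

    def agreement(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def alert(self):
        # Avoid alerting on too few cases to be meaningful.
        if len(self.outcomes) < self.min_samples:
            return False
        return self.agreement() < self.min_agreement
```

Because the window is bounded, older cases drop out automatically, so the alert reflects recent performance and not the system's lifetime average.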

The integration of AI into healthcare is inevitable, but its success hinges on our ability to learn from these failures and build systems that are transparent, accountable, and resilient.

MedSense Insight

The diagnostic failures plaguing AI systems today are not merely technical glitches; they are a symptom of a broader crisis in healthcare innovation. As AI becomes more deeply embedded in clinical practice, the stakes for patient safety have never been higher. The solution lies not in abandoning AI but in reimagining its role as a supplementary tool rather than a replacement for human expertise. Clinicians must remain the final arbiters of care, with AI serving as a decision-support mechanism that augments, rather than dictates, medical judgment. The future of AI in healthcare will be defined by our willingness to confront these challenges with humility, rigor, and an unwavering commitment to patient well-being.

Key Takeaways

- AI diagnostic failures are escalating due to rushed implementation, data integrity issues, and regulatory gaps.

- Failures can lead to misdiagnosis, delayed treatment, and increased clinician burden.

- Solutions include redundancy systems, enhanced validation protocols, and human-in-the-loop models.

- Collaboration among healthcare providers, technologists, and regulators is essential to ensure AI tools are safe, transparent, and effective.

- The path forward requires a balanced approach that prioritizes patient safety while embracing technological innovation.
