Paul Boyer, a psychotherapist at Kaiser Permanente in Oakland, California, has a front-row seat to the AI revolution sweeping through healthcare. But his experience with the latest innovation, note-taking software from Abridge designed to summarize patient visits in seconds, has left him underwhelmed. For many clinicians, this technology is a godsend, slashing the mountain of paperwork that has long choked their ability to focus on patients.

Yet beneath the surface of this technological marvel lies a dangerous gamble. As the Trump administration and figures like Robert F. Kennedy Jr. push to relax safeguards for AI-driven healthcare tools, experts are sounding the alarm. The rush to embrace AI in medicine is outpacing the safeguards needed to protect patients from errors, biases, and outright failures that could cost lives.

Why This Is Escalating

- Regulatory Rollback: The proposed changes aim to strip away critical oversight, allowing AI tools to be deployed without the rigorous validation required for medical devices.

- Clinician Skepticism: Despite the hype, many healthcare professionals remain unconvinced. Boyer’s lukewarm response reflects a broader sentiment—AI tools often fail to deliver on their promises, leaving clinicians to clean up the mess.

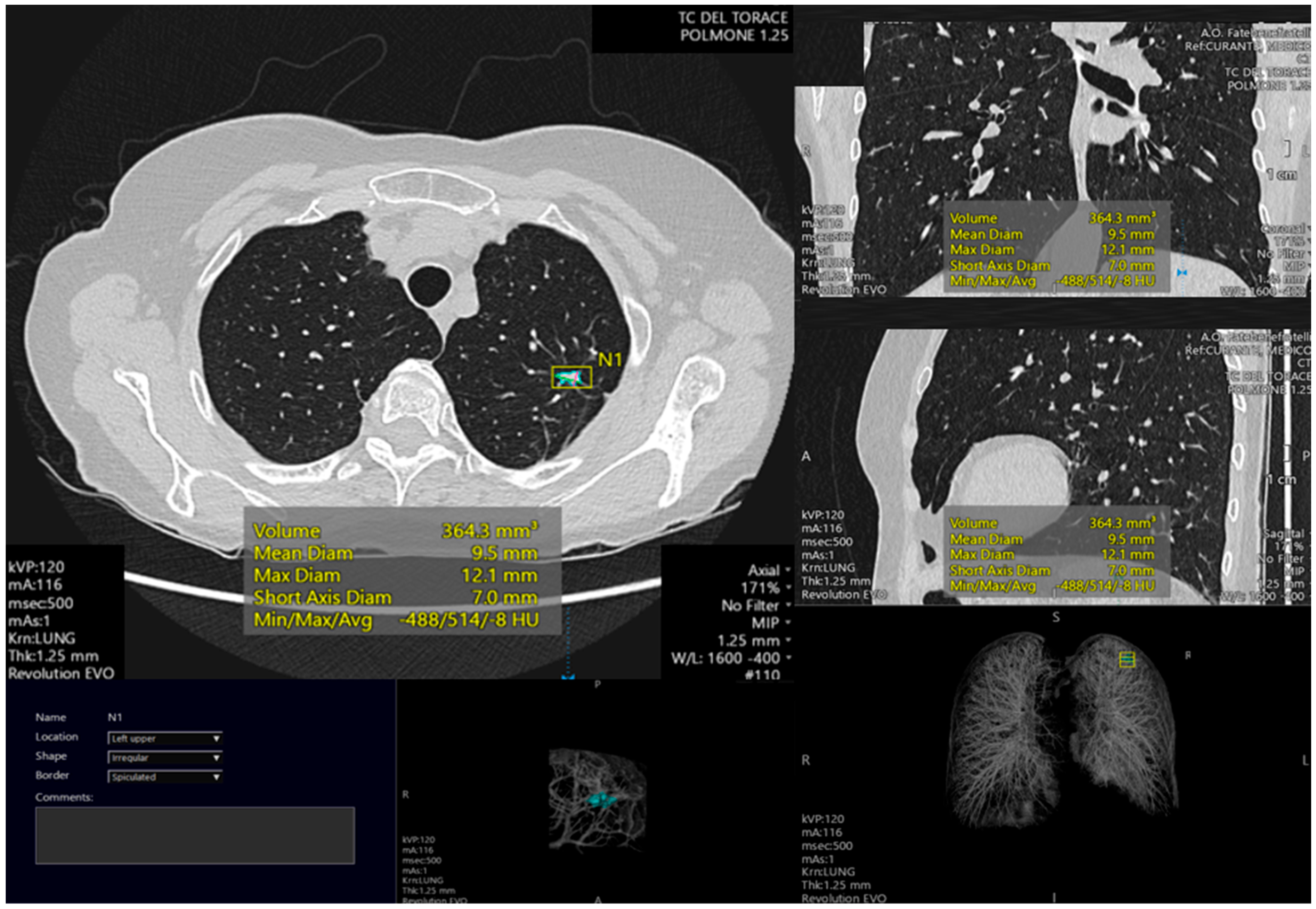

- Patient Safety at Risk: AI systems trained on flawed or biased data can produce catastrophic errors, from misdiagnoses to incorrect treatment recommendations. The consequences? Lives hanging in the balance.

What You Should Do Now

If you’re a patient, a clinician, or simply someone who values evidence-based medicine, here’s how to stay ahead of this unfolding crisis:

- Demand Transparency: Ask your healthcare provider whether AI tools are being used in your care—and if so, demand proof of their accuracy and safety.

- Advocate for Stronger Regulations: Support policies that prioritize patient safety over corporate convenience. Relaxed safeguards could turn AI into a ticking time bomb in healthcare.

- Stay Informed: Follow updates from reputable medical organizations and watch for studies that expose the real-world performance of AI tools in clinical settings.

Understanding the Risk

AI in healthcare isn’t inherently bad—but its unchecked expansion is a recipe for disaster. The technology’s potential is undeniable: faster diagnoses, streamlined workflows, and even early detection of diseases. However, the risks are equally stark:

- Data Bias: AI systems trained on non-diverse datasets can produce skewed results, disproportionately affecting marginalized communities.

- Over-Reliance: Clinicians may defer to AI recommendations without critical evaluation, letting through errors that a careful human review would have caught.

- Accountability Vacuum: Who is responsible when an AI tool fails? Current regulations leave patients and providers in legal limbo.

The push to relax AI healthcare regulations isn’t just a policy debate—it’s a public health emergency waiting to happen.

MedSense Insight

The AI revolution in healthcare is inevitable, but its success hinges on one critical factor: trust. Without robust safeguards, trust will erode, and the technology’s potential will be squandered. The question isn’t whether AI will transform medicine—it’s whether we’ll let it do so safely.

Key Takeaway

AI tools in healthcare hold immense promise, but their unchecked deployment under relaxed regulations could unleash a wave of preventable medical errors. Patients and clinicians must demand accountability, transparency, and rigorous validation to ensure these technologies serve as life-saving allies—not silent killers.
